The Measurement Problem

Organizations have tried for decades to measure safety culture and engagement using surveys and lagging indicators. These tools report how people say they feel or what has already happened, not whether thinking is occurring at the point of work. Safety culture surveys measure what people believe. Artifact counts measure what paperwork exists. Neither measures whether cognitive engagement actually occurs before exposure to risk.

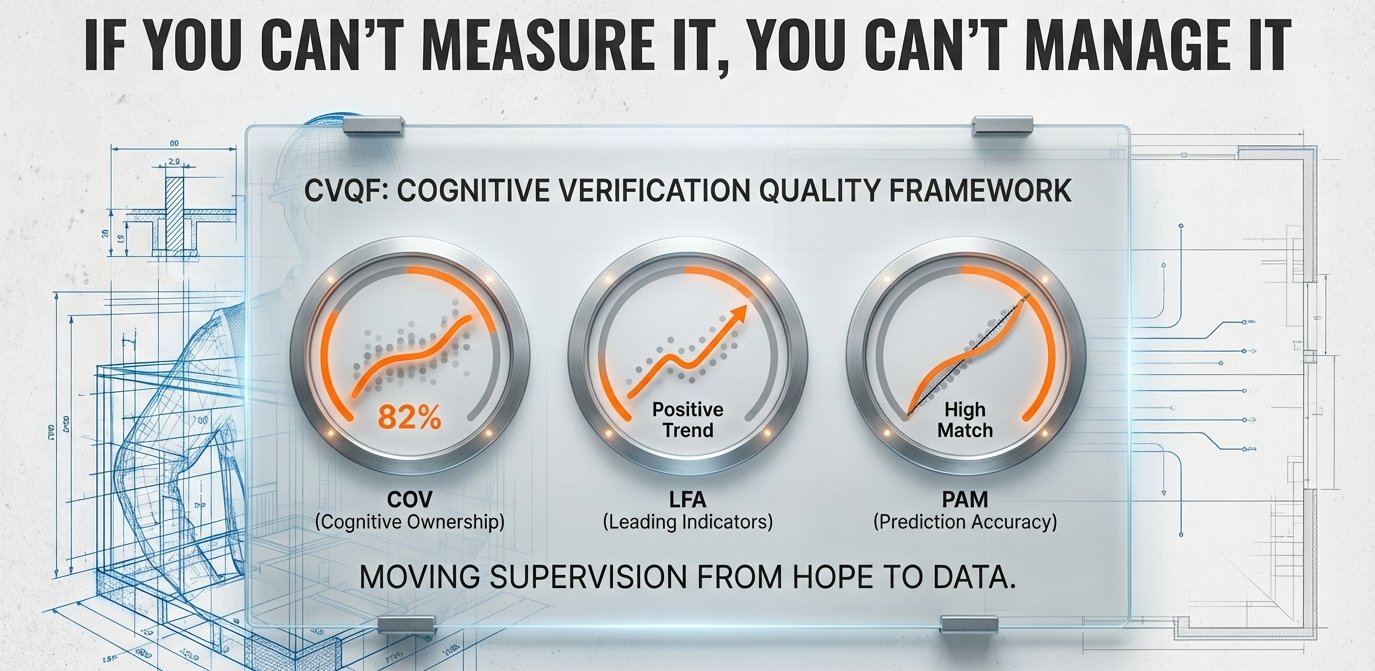

The Cognitive Verification Quality Framework (CVQF) solves this. For the first time, you can measure cognitive verification quality in real time.

This is not a frontline metric. Workers do not fill out forms about their conversations. CVQF is a leadership discipline. All measurement burden sits with leadership and support functions.

The Four Indicators

COV: Cognitive Ownership Verification

COV tracks whether workers are taking genuine cognitive ownership of the task or simply repeating what they were told. There is a measurable difference between a worker who has mentally processed the hazards and conditions and a worker who is echoing supervisor language. COV makes that difference visible and trackable across crews, sites, and time.

LFA: Leadership Focus Assessment

LFA reveals what the organization actually values by tracking what leaders ask about first when they approach work. The first question a leader asks signals the entire system's priority. LFA tracks whether that priority is documentation or cognition, and whether it is shifting over time.
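To make the idea concrete, here is a minimal sketch of how first-question observations might be tallied. It is not the CVQF scoring protocol, which is part of the implementation engagement. The category labels ("cognition", "documentation"), the function name, and the per-period layout are all assumptions made for illustration.

```python
from collections import Counter

def cognition_share(first_questions):
    """Return the fraction of observed first questions tagged 'cognition'.

    `first_questions` is a list of category labels, one per leader
    observation. The labels are hypothetical, not defined by CVQF.
    """
    if not first_questions:
        raise ValueError("at least one observation is required")
    counts = Counter(first_questions)
    return counts["cognition"] / len(first_questions)

# Hypothetical observations recorded by a support function, grouped by quarter.
observations = {
    "Q1": ["documentation", "documentation", "cognition", "documentation"],
    "Q2": ["cognition", "documentation", "cognition", "cognition"],
}

# A rising share would suggest leadership focus is shifting toward cognition.
trend = {period: cognition_share(labels) for period, labels in observations.items()}
```

In this sketch the share moves from 0.25 in Q1 to 0.75 in Q2, the kind of shift over time that LFA is meant to surface.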

PAM: Predictive Accuracy Measure

PAM tracks the gap between what workers anticipated before a task and what they actually encountered during execution. When pre-task cognitive verification is working, that gap narrows because workers have already mentally simulated the task in current conditions. A widening gap reveals that pre-task conversations are becoming routine rather than genuine.
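One way to picture this gap is as the share of encountered conditions that nobody anticipated beforehand. The sketch below assumes that definition purely for illustration. The actual PAM scoring protocol is part of the implementation engagement, and the function name and condition labels here are hypothetical.

```python
def prediction_gap(anticipated, encountered):
    """Return the share of encountered conditions that were not anticipated.

    0.0 means everything encountered was anticipated; 1.0 means nothing was.
    This definition is an illustrative assumption, not the CVQF protocol.
    """
    anticipated, encountered = set(anticipated), set(encountered)
    if not encountered:
        return 0.0  # nothing was encountered, so nothing was missed
    missed = encountered - anticipated
    return len(missed) / len(encountered)

# Hypothetical task records: (conditions anticipated, conditions encountered).
tasks = [
    ({"wet surface", "overhead load"}, {"wet surface", "pinch point"}),
    ({"wet surface", "pinch point"}, {"wet surface", "pinch point"}),
]

# Gaps per task in order; a widening series would be the warning sign.
gaps = [prediction_gap(before, during) for before, during in tasks]
```

Here the gap falls from 0.5 to 0.0 across the two tasks. A series moving the other way would indicate that pre-task conversations are becoming routine.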

IVC: Investigation Verification Check

IVC closes the feedback loop between incidents and pre-task verification. It tracks whether cognitive verification actually occurred before investigated events and what it produced. Over time, IVC data reveals patterns about when and where cognitive verification breaks down, giving leadership actionable insight rather than retrospective blame.

The Sustainability Finding

Field observations across multiple implementation sites reveal that approximately 40% of sites sustain the method long-term while approximately 60% experience degradation within 18 to 24 months. The determining factor is not training quality, supervisor motivation, or organizational culture. The determining factor is measurement discipline.

Sites that maintain CVQF indicators on executive dashboards sustain the practice independent of external support. Sites that remove CVQF from dashboards during "simplification" initiatives or revert to artifact-based metrics experience predictable degradation regardless of supervisor motivation.

You get what you measure. If you measure forms, you get forms. If you measure conversation quality, you get thinking.

Implementation

The specific collection methods, scoring protocols, diagnostic interpretation criteria, and dashboard integration for each indicator are part of the implementation engagement. CVQF is designed to embed into your existing operational rhythms without adding documentation burden to frontline workers.

Explore the Interactive Assurance Level Architecture. See how the four indicators integrate with your operational systems.

Next: The ABCD Framework, the implementation pathway that includes CVQF integration.